You rolled out AI-powered coaching. Your team is using RolePlayAI. The tech is doing its thing.

But when the CFO asks, “What are we getting for this investment?” — you freeze.

Engagement metrics sound great until someone asks what they mean for revenue. Usage reports are impressive until leadership wants to know if reps are actually closing more deals. And “our sellers love it” doesn’t hold up in a budget meeting when you’re asking for more resources.

This isn’t a new problem — L&D and sales enablement teams have always struggled to connect training to business outcomes. But AI training has made it more urgent. The tools are powerful, the price tags are real, and executives want proof that the investment pays off.

Here’s the thing: the data is there. You just need to know what to measure and how to tell the story.

Why “everyone’s using it” doesn’t cut it anymore

Participation rates used to be the gold standard. If people showed up to training, that counted as success.

Not anymore.

Industry conferences in 2025, from Ai4 to the National Sales Conference and the Training Industry AI Showcase, made one thing clear: executives are done with vanity metrics. They’re not interested in how many people logged into the platform or completed a module. They want to know if training changed behavior. If behavior changed outcomes. If outcomes moved the business forward.

Engagement is still important (you can’t impact what people don’t use), but it’s just the starting line. The finish line is revenue.

Sales leaders are under pressure to justify every dollar spent. Training budgets are no exception. If you can’t connect your AI coaching tools to pipeline, win rates, or deal velocity, you’re vulnerable when budget cuts come around.

What actually matters: connecting training to real business outcomes

Measuring AI training impact means tracking three layers: adoption, behavior change, and business results.

Start with adoption (but don’t stop there)

Adoption metrics tell you if the tool is being used — and how. Track things like:

- Active users (daily, weekly, monthly)

- Completion rates for coaching activities or roleplay scenarios

- Time spent in training vs. time spent selling

- Repeat usage (are reps coming back on their own, or do they need reminders?)

These numbers matter because they reveal whether your training is accessible, relevant, and sticky. Low adoption usually means one of three things: the tool is too complicated, it doesn’t fit into workflows, or people don’t see the value.

But high adoption alone doesn’t prove ROI. It just proves people are using the thing. You need to go deeper.
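As a rough illustration of the adoption layer, the sketch below counts weekly and monthly active users and a repeat-usage rate from a session log. The log format, rep names, and dates are made up for the example; this is not a real RolePlayAI export, so adapt the field names to whatever your platform actually provides.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical session log: (rep_id, session_date). Invented for illustration.
sessions = [
    ("ana", date(2025, 3, 3)),
    ("ana", date(2025, 3, 5)),
    ("ben", date(2025, 3, 4)),
    ("cy", date(2025, 2, 10)),
    ("ana", date(2025, 3, 12)),
]

def active_users(sessions, end, window_days):
    """Distinct reps with at least one session in the window ending on `end` (inclusive)."""
    start = end - timedelta(days=window_days - 1)
    return {rep for rep, day in sessions if start <= day <= end}

def repeat_usage_rate(sessions):
    """Share of reps who practiced on more than one distinct day."""
    days_by_rep = defaultdict(set)
    for rep, day in sessions:
        days_by_rep[rep].add(day)
    if not days_by_rep:
        return 0.0
    repeaters = sum(1 for days in days_by_rep.values() if len(days) > 1)
    return repeaters / len(days_by_rep)

today = date(2025, 3, 12)
weekly = active_users(sessions, today, 7)
monthly = active_users(sessions, today, 30)
rate = repeat_usage_rate(sessions)
print(f"WAU={len(weekly)} MAU={len(monthly)} repeat rate={rate:.0%}")
```

Counting distinct days rather than raw sessions is a deliberate choice here: a rep who runs three scenarios in one sitting and never returns should not count as a repeat user.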

Track behavior change in the field

Behavior change is where AI training starts to show its value. This is about whether reps are actually applying what they practiced.

Look at metrics like:

- Objection handling success rates (before and after training)

- Discovery call quality scores (measured through Conversation Intelligence)

- Talk-to-listen ratios in customer conversations

- Use of specific talk tracks or methodologies taught in training

- Time to competency for new hires

Conversation Intelligence platforms can pull this data automatically by analyzing call recordings and scoring reps on specific behaviors. For example, if your team practiced handling pricing objections in RolePlayAI, you can measure how often those objections come up in real calls and how confidently reps respond.

Behavior metrics prove that training stuck. Reps aren’t just completing modules — they’re changing how they sell.

Connect the dots to revenue

This is the layer that gets executive attention. Business outcomes are what justify your budget and unlock future investment.

Image: Readiness Scorecards in Brainshark (Bigtincan Readiness)

Focus on metrics like:

- Win rates (overall and by deal size)

- Average deal size

- Sales cycle length

- Quota attainment rates

- Pipeline velocity

- Ramp time for new hires

The goal is to draw a clear line from training to performance. For example: “Reps who completed the objection handling training in RolePlayAI closed deals 18% faster than those who didn’t.” Or: “New hires who used AI coaching reached quota two months earlier than the previous cohort.”

These are the numbers that matter in budget meetings.
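Of the metrics listed above, pipeline velocity is the one that usually needs a formula. One commonly used definition multiplies open opportunities, win rate, and average deal size, then divides by sales cycle length in days. The sketch below assumes that definition; the function name and the sample figures are illustrative, not taken from any real team.

```python
def pipeline_velocity(open_opportunities, win_rate, avg_deal_size, cycle_days):
    """Expected revenue per day under one common definition of pipeline velocity.

    velocity = opportunities * win_rate * avg_deal_size / cycle_days
    """
    if cycle_days <= 0:
        raise ValueError("cycle_days must be positive")
    if not 0 <= win_rate <= 1:
        raise ValueError("win_rate must be a fraction between 0 and 1")
    return open_opportunities * win_rate * avg_deal_size / cycle_days

# Illustrative numbers only: the same pipeline with a 50-day vs. a 40-day cycle.
before = pipeline_velocity(40, 0.25, 20_000, 50)
after = pipeline_velocity(40, 0.25, 20_000, 40)
print(f"before: ${before:,.0f}/day, after: ${after:,.0f}/day")
```

Because cycle length sits in the denominator, shortening the cycle raises velocity even when win rate and deal size stay flat, which is why faster closes are worth reporting on their own.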

How to structure your reporting so leadership actually listens

Once you’ve got the data, you need to package it in a way that makes sense to executives who don’t live in the sales enablement world.

Lead with the business outcome

Don’t bury the headline. Start your report with the metric that matters most to leadership — usually revenue, win rates, or time to productivity.

For example: “AI coaching reduced new hire ramp time by six weeks, accelerating $2.1M in pipeline contribution.”

Then work backward to explain how training drove that result.

Show before-and-after comparisons

Executives love proof that something changed. Use cohort comparisons to show what happened before and after you implemented AI training.

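For example, compare reps who went through AI roleplay training vs. those who didn’t. Or compare Q1 performance (before training) to Q3 performance (after training). Where you can, match the groups on tenure, territory, and segment, so the difference reflects the training rather than the mix of reps.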

Keep the comparison simple. Two numbers. Clear difference. Easy to understand.
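A minimal sketch of that two-number comparison, assuming you can export sales cycle length in days per closed deal for each cohort. The figures are invented for illustration, and a real analysis would need larger and comparable groups before anyone reads much into the difference.

```python
from statistics import median

def percent_change(baseline, comparison):
    """Relative change from baseline to comparison, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (comparison - baseline) / baseline * 100

# Hypothetical sales cycle lengths (days) for closed deals in each cohort.
untrained_cycle_days = [62, 58, 70, 66, 64]
trained_cycle_days = [51, 55, 49, 60, 53]

# Median is used so a single unusually long deal does not skew the headline.
baseline = median(untrained_cycle_days)
trained = median(trained_cycle_days)
change = percent_change(baseline, trained)

print(f"Median cycle: {baseline} days (untrained) vs. {trained} days (trained)")
print(f"Change: {change:.1f}%")
```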

Include qualitative feedback (but keep it short)

Numbers tell the story, but quotes from reps and managers add credibility. Include one or two sentences that capture how the training felt from the user’s perspective.

Something like: “I practiced handling budget objections in RolePlayAI three times before my call with the VP of Finance. When the objection came up, I knew exactly what to say. We closed the deal two weeks later.”

Anecdotes don’t replace data, but they make the data more relatable.

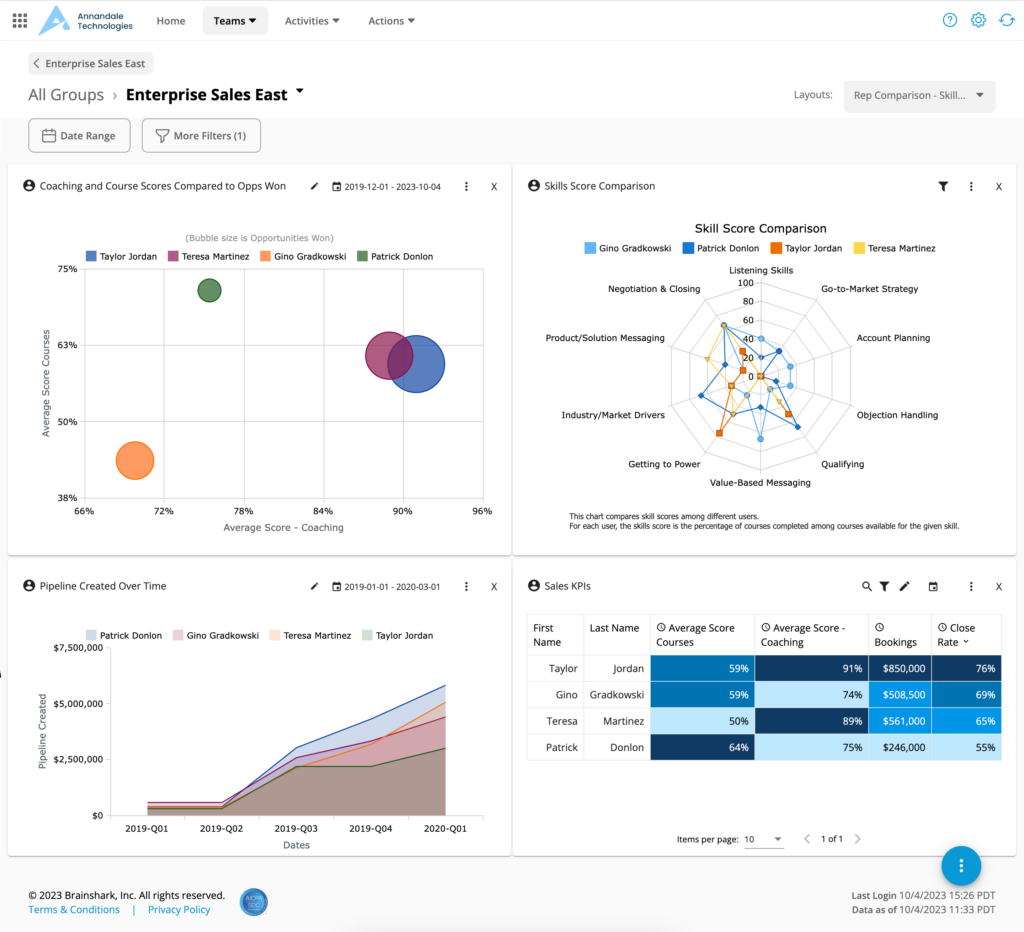

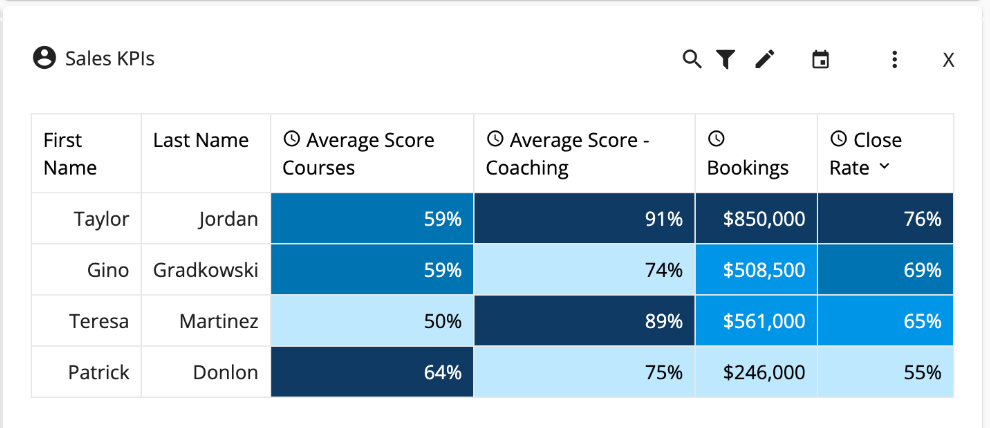

Make it visual

C-suite executives are busy. They’re skimming, not reading. Use charts, graphs, and one-pagers to make your insights scannable.

Show trends over time. Highlight the metrics that moved. Make it easy for them to see the impact at a glance.

Correlating CoachingAI and RolePlayAI scores with sellers’ bookings and close rates in Scorecards
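If you want a number behind a score-versus-bookings chart, a Pearson correlation is one simple option. The sketch below is not how Scorecards computes anything; the per-rep scores and bookings are made-up values, and a strong correlation on its own does not show that training caused the bookings.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / sqrt(var_x * var_y)

# Hypothetical per-rep data: average roleplay score and quarterly bookings ($K).
roleplay_scores = [62, 70, 75, 81, 88, 93]
bookings_k = [110, 150, 140, 190, 210, 260]

r = pearson(roleplay_scores, bookings_k)
print(f"Correlation between roleplay score and bookings: r = {r:.2f}")
```

With only a handful of reps, treat the result as a conversation starter rather than proof, and pair it with the cohort comparison so leadership sees both views.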

Common pitfalls (and how to avoid them)

Even with good data, reporting can go sideways. Here are the most common mistakes we see sales enablement teams make — and how to avoid them.

Mistake 1: Measuring too many things

You don’t need to track every possible metric. Focus on the three to five that matter most to your business. More metrics = more confusion.

Pick the ones that directly connect to your company’s goals. If leadership cares about shortening the sales cycle, measure cycle length. If they care about new hire productivity, measure ramp time.

Mistake 2: Waiting too long to measure

Don’t wait six months to pull your first report. Set up regular check-ins (monthly or quarterly) to track progress and make adjustments.

Frequent reporting also helps you catch problems early. If adoption is low or behavior isn’t changing, you can fix it before the annual budget review.

Mistake 3: Forgetting to celebrate wins

When the data shows improvement, share it. Send updates to leadership. Recognize the teams and individuals who drove results. Wins build momentum and make it easier to secure future investment.

Sales enablement teams often assume their work speaks for itself. It doesn’t. You have to tell the story.

Your AI training already has impact — now prove it

Most sales enablement leaders already know their AI training is working. Reps are more confident. Conversations are getting better. Deals are closing.

The missing piece isn’t the impact — it’s the measurement.

You don’t need a PhD in data science to prove ROI. You just need to connect the right metrics to the outcomes your executives care about. Track adoption. Measure behavior change. Tie it to revenue. Package it clearly. Share it often.

When you do, AI training stops being a “nice to have” and starts being a strategic priority.